Questions

2025FallB-X-CSE571-78760 模块 7: 顺序决策 知识检查 Module 7: Sequential Decision-Making Knowledge Check

Numerical

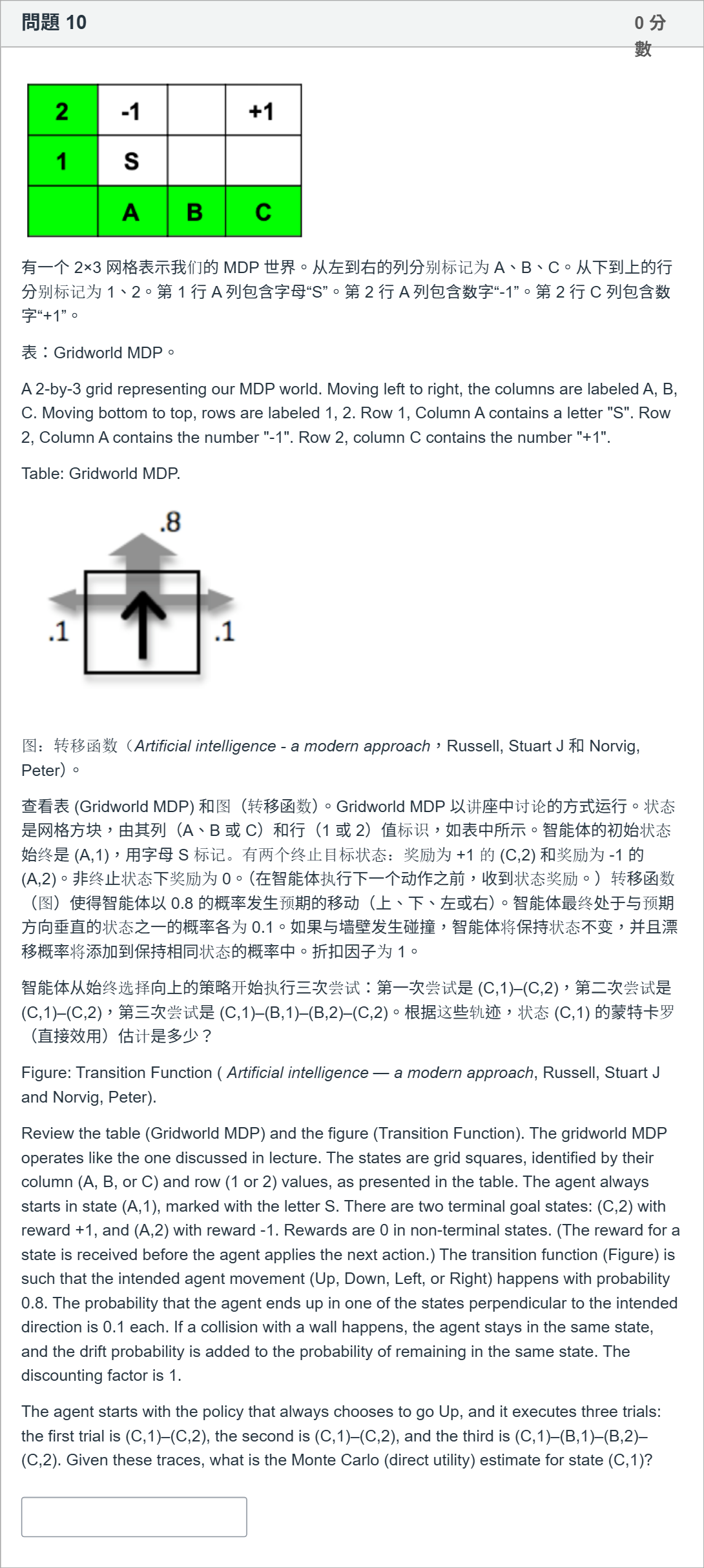

有一个 2×3 网格表示我们的 MDP 世界。从左到右的列分别标记为 A、B、C。从下到上的行分别标记为 1、2。第 1 行 A 列包含字母“S”。第 2 行 A 列包含数字“-1”。第 2 行 C 列包含数字“+1”。 表:Gridworld MDP。 A 2-by-3 grid representing our MDP world. Moving left to right, the columns are labeled A, B, C. Moving bottom to top, rows are labeled 1, 2. Row 1, Column A contains a letter "S". Row 2, Column A contains the number "-1". Row 2, column C contains the number "+1". Table: Gridworld MDP. 图:转移函数(Artificial intelligence - a modern approach,Russell, Stuart J 和 Norvig, Peter)。 查看表 (Gridworld MDP) 和图(转移函数)。Gridworld MDP 以讲座中讨论的方式运行。状态是网格方块,由其列(A、B 或 C)和行(1 或 2)值标识,如表中所示。智能体的初始状态始终是 (A,1),用字母 S 标记。有两个终止目标状态:奖励为 +1 的 (C,2) 和奖励为 -1 的 (A,2)。非终止状态下奖励为 0。(在智能体执行下一个动作之前,收到状态奖励。)转移函数(图)使得智能体以 0.8 的概率发生预期的移动(上、下、左或右)。智能体最终处于与预期方向垂直的状态之一的概率各为 0.1。如果与墙壁发生碰撞,智能体将保持状态不变,并且漂移概率将添加到保持相同状态的概率中。折扣因子为 1。 智能体从始终选择向上的策略开始执行三次尝试:第一次尝试是 (C,1)–(C,2),第二次尝试是 (C,1)–(C,2),第三次尝试是 (C,1)–(B,1)–(B,2)–(C,2)。根据这些轨迹,状态 (C,1) 的蒙特卡罗(直接效用)估计是多少? Figure: Transition Function ( Artificial intelligence — a modern approach, Russell, Stuart J and Norvig, Peter). Review the table (Gridworld MDP) and the figure (Transition Function). The gridworld MDP operates like the one discussed in lecture. The states are grid squares, identified by their column (A, B, or C) and row (1 or 2) values, as presented in the table. The agent always starts in state (A,1), marked with the letter S. There are two terminal goal states: (C,2) with reward +1, and (A,2) with reward -1. Rewards are 0 in non-terminal states. (The reward for a state is received before the agent applies the next action.) The transition function (Figure) is such that the intended agent movement (Up, Down, Left, or Right) happens with probability 0.8. The probability that the agent ends up in one of the states perpendicular to the intended direction is 0.1 each. If a collision with a wall happens, the agent stays in the same state, and the drift probability is added to the probability of remaining in the same state. The discounting factor is 1. The agent starts with the policy that always chooses to go Up, and it executes three trials: the first trial is (C,1)–(C,2), the second is (C,1)–(C,2), and the third is (C,1)–(B,1)–(B,2)–(C,2). Given these traces, what is the Monte Carlo (direct utility) estimate for state (C,1)?

View Explanation

Verified Answer

Please login to view

Step-by-Step Analysis

The question describes a Gridworld MDP and provides three complete traces starting from state (C,1). We are to compute the Monte Carlo (direct utility) estimate for (C,1) based on these traces, with a discount factor of 1.

First, restating the scenario: the agent always starts in (A,1) with S, but the traces given ......Login to view full explanationLog in for full answers

We've collected over 50,000 authentic exam questions and detailed explanations from around the globe. Log in now and get instant access to the answers!

Similar Questions

Shown is the Q Actor-Critic (QAC) function, with line numbers. 1. Initialise 𝑠 , 𝜃 2. Sample 𝑎 ∼ 𝜋 𝜃 3. for each step do 4. Sample reward 𝑟 = 𝑅 𝑠 𝑎 ; sample transition 𝑠 ′ ∼ 𝑃 𝑠 , ⋅ 𝑎 5. Sample action 𝑎 ′ ∼ 𝜋 𝜃 ( 𝑠 ′ , 𝑎 ′ ) 6. 𝛿 = 𝑟 + 𝛾 𝑄 𝑤 ( 𝑠 ′ , 𝑎 ′ ) − 𝑄 𝑤 ( 𝑠 , 𝑎 ) 7. 𝜃 ← 𝜃 + 𝛼 ∇ 𝜃 𝑙 𝑜 𝑔 𝜋 𝜃 ( 𝑠 , 𝑎 ) 𝑄 𝑤 ( 𝑠 , 𝑎 ) 8. 𝑤 ← 𝑤 + 𝛽 𝛿 𝜙 ( 𝑠 , 𝑎 ) 9. 𝑎 ← 𝑎 ′ , 𝑠 ← 𝑠 ′ 10. end for Which of the following statements is true (can be more than one)?

The value of an action 𝑞 𝜋 ( 𝑠 , 𝑎 ) depends on the expected next reward and the expected value of the next state. We can think of this in terms of a small backup diagram, as follows: Let 𝑃 ( 𝑠 ′ | 𝑠 , 𝑎 ) be the transition probability and 𝑟 ¯ ( 𝑠 , 𝑎 , 𝑠 ′ ) = 𝐸 [ 𝑅 𝑡 + 1 | 𝑆 𝑡 = 𝑠 , 𝐴 𝑡 = 𝑎 , 𝑆 𝑡 + 1 = 𝑠 ′ ] the expected reward for the transion from state 𝑠 to state 𝑠 ′ via action 𝑎 . Rearrange the definition of 𝑞 𝜋 ( 𝑠 , 𝑎 ) in terms of these quantities, such that no expected-value notation appears in the equation. A. 𝑞 𝜋 ( 𝑠 , 𝑎 ) = ∑ 𝑠 ′ 𝑃 ( 𝑠 ′ ∣ 𝑠 , 𝑎 ) [ 𝑟 ¯ ( 𝑠 , 𝑎 , 𝑠 ′ ) + 𝛾 𝑞 𝜋 ( 𝑠 ′ , 𝑎 ) ] B. 𝑞 𝜋 ( 𝑠 , 𝑎 ) = ∑ 𝑠 ′ [ 𝑟 ¯ ( 𝑠 , 𝑎 , 𝑠 ′ ) + 𝛾 ] 𝑃 ( 𝑠 ′ ∣ 𝑠 , 𝑎 ) 𝑣 𝜋 ( 𝑠 ′ ) C. 𝑞 𝜋 ( 𝑠 , 𝑎 ) = ∑ 𝑠 ′ 𝑃 ( 𝑠 ′ | 𝑠 , 𝑎 ) [ 𝑟 ¯ ( 𝑠 , 𝑎 , 𝑠 ′ ) + 𝛾 𝑣 𝜋 ( 𝑠 ′ ) ] D. 𝑞 𝜋 ( 𝑠 , 𝑎 ) = 𝑃 [ 𝑠 ′ ∣ 𝑠 , 𝑎 ] [ 𝑟 ¯ ( 𝑠 , 𝑎 , 𝑠 ′ ) + 𝛾 𝑣 𝜋 ( 𝑠 ′ ) ]

Which statement best describes the difference between SARSA and Q-learning?

Which of the following best describes a key difference between Monte Carlo and Temporal-Difference (TD) learning?

More Practical Tools for Students Powered by AI Study Helper

Making Your Study Simpler

Join us and instantly unlock extensive past papers & exclusive solutions to get a head start on your studies!